
In between extended discussions of current news and sixty-second scrambles to the sixth floor for free food, I am excited to report that my adventures as an intern at CSIS continue to teach me what the world of strategic studies entails. The two weeks since my last post have been incredible, as I have been exposed to more defense-industrial work and analysis than ever before.

This past week, the CSIS DIIG team was the proud host of a fourth workshop on defense-related artificial intelligence, a fantastic experience from which I feel I learned a lot. The conference brought together leaders in artificial intelligence from around the defense community to debate the ethical, strategic, and security-based repercussions of certain decisions in AI. Previous workshop topics included what constitutes artificial intelligence and how to distinguish machine learning from AI.
One of the debates I have found most interesting concerns accountability for AI decisions. It is no lie that the world is facing a crisis of accountability, as seen in the news pertaining to our nation’s current administration. To use some media jargon, this “post-truth” world we live in raises major questions about how consequences are to be delivered to a guilty party. This debate has a reserved spot in AI-related discussions as well. As we’ve seen with recent events involving Facebook and Google, there is a desire to blame poor decisions on software as a means of escaping accountability for privacy violations and misconduct. Yet is there any truth in blaming poor decisions on software instead of its human creators? As someone who studies the mathematical modeling of human decision theory, I see an undeniable overlap with artificial intelligence (an overlap I may indeed choose to pursue as a career one day). As I’ve mentioned in previous posts, I have fallen in love with the concept of existentialism as it applies to decision theory. Existentialism suggests that human existence precedes essence, essentially (haha) meaning that how we choose to live our lives defines our identity more than what we are initially born into with respect to self-actualization. With this in mind, I cannot help but argue that if a machine is created to make its own decisions, then its identity is effectively at fault for making said decisions.
However, an important note on this discussion is the difference between conceptualizing an action and actually performing it. A decision is essentially the exact moment that a thought enters reality: it is the bridge between the conceptual and the actual world, the exact moment a behavior is born. Most AI systems in defense are (currently) not the ones acting upon their decisions; they are merely theorizing actions. With this in mind, it is the individual who carries out the decision (and therefore turns the conceptual action into an actual one) who remains at fault and is deserving of consequences. In this case, that means humans. When it comes to creating AI that acts upon its own decisions, however, the line gets fuzzier and is worthy of the intense discussion it is receiving. Can we train an AI to recognize the difference between good and bad decisions? What is the format of an ethical decision? And are ethics an essential quality or one we learn by existence? All are important philosophical questions that need answering before AI is used as a weapon.
So as you can imagine, there have been some heavy-hitting discussions here at CSIS, some very early in the morning! But hey, that’s what coffee is here for, right? Speaking of heavy-hitting, this past week I was also introduced to the strange but amazing world of government/corporate softball, a D.C. tradition I was more than happy to participate in. I guess that my one semester as an outfielder for the 7th-grade softball team DID help me out in the long run! At the corporate picnic last Friday, the Whippersnappers faced off against the Geezers (ages 30 and up; I guess I’m 11 years away from being a geezer!) in an intense match. While not as intense as the supposedly mythical game between the CIA and FBI teams, the competition was tough. Although the Geezers unfortunately beat us Whippersnappers 10 to 7, I was proud to play catcher for a single inning.
In other exciting news, the ISP’s space defense team next door to DIIG held a conference earlier this week, with Buzz Aldrin as one of the big attendees. While DIIG interns were not invited to attend the event, it was exciting to be just four floors above the space legend (and to hear the space defense intern’s tales of him supposedly punching a conspiracy theorist in the face in a YouTube video). Furthermore, Northrop Grumman came in the other day to teach the team more about the engineering behind stealth aviation, an aerodynamics lesson one could only dream about! Finally, I continue to learn more from my mentor about Japanese-American international relations, and I hope to get some tips on learning Japanese before school starts this fall! All in all, these past weeks continue to be an incredible learning experience. As I walk past the White House every morning, waving at the nearby Secret Service dogs, I cannot help but think about how thankful I am to be learning from the best of the best in D.C. about the field I love the most, even if the weather lately HAS been more of a monsoon season!


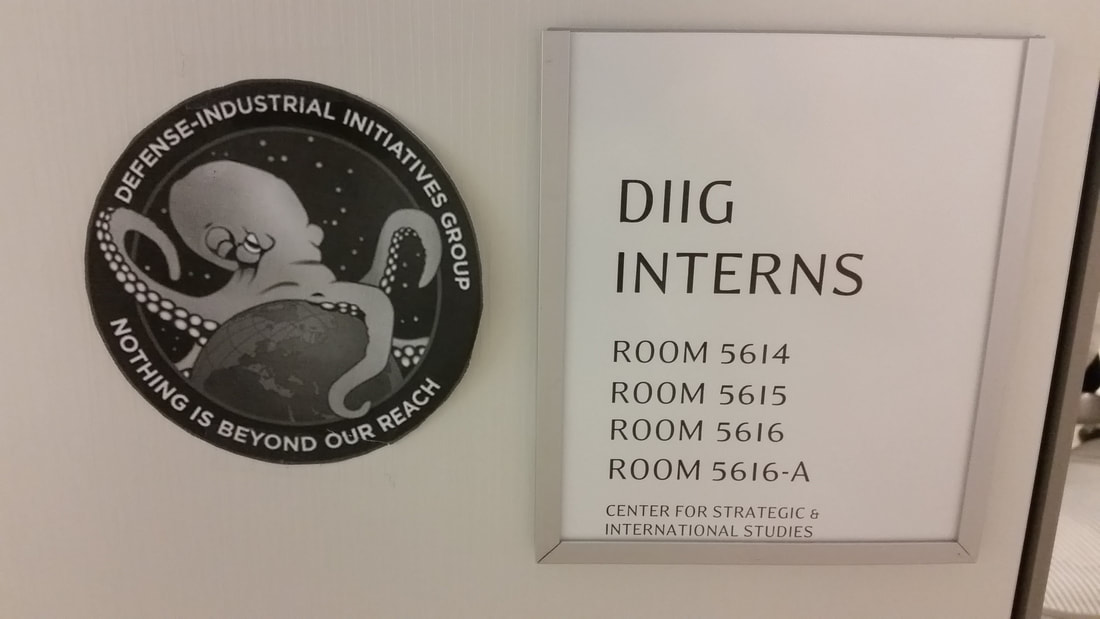

This past week, I began work at CSIS, enjoying my first taste of think-tank and office life. From coffee-stained CIA mugs to ominous intern insignias (pictured above), I find myself quite at home in the Defense-Industrial Initiatives Group (DIIG). To say the least, I am fascinated by think-tank life. I had no idea that there was a place where research both political and statistical in nature could live in such perfect harmony, in such a creative and open-minded manner. Housed on the 5th floor, right down the hall from Space Defense (Space Force, anyone?), I have found a place that studies strategy from a mathematical standpoint for genuinely the first time in my academic life. While copyediting, outlining, and taking notes in meetings might seem boring when mentioned on this blog, I have been given the incredible honor of reading through some of the most creative and insightful works of statistical defense analysis I have ever encountered. As I continue to adjust to this new environment and learn from the others around me, I only hope that the discoveries I make on a day-to-day basis continue, so bear with me as I record some of the more prominent memories of this first week!


Mac Gagne

Statistics, Mathematics, Decision Science, Game Theory, Psychology, Political Science, War Gaming.
